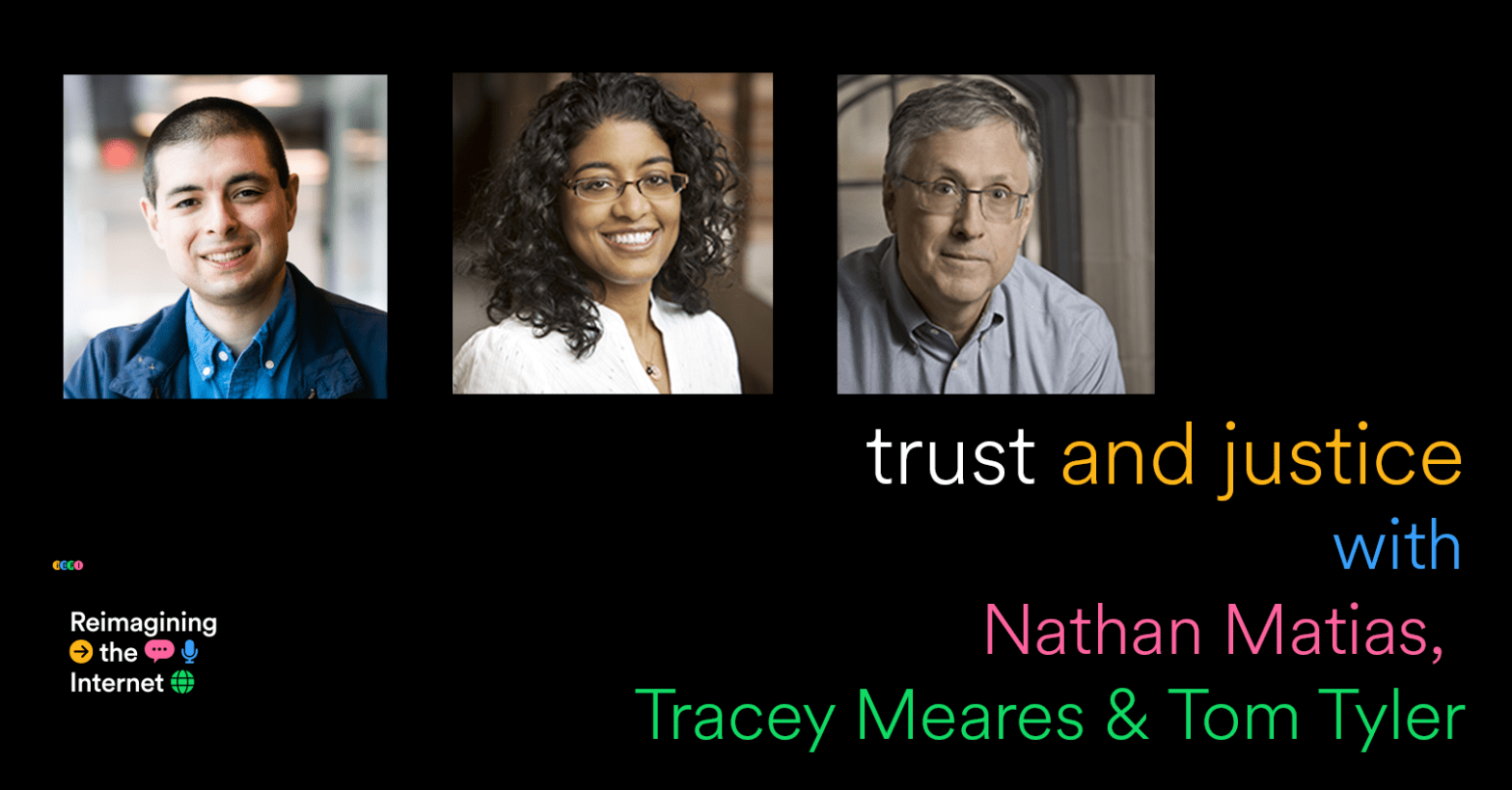

Trusting justice means making it feel meaningful: people have to trust that justice systems are themselves just. To conclude our miniseries on Trust, we talk to Nathan Matias about how exactly people lost trust in Elon Musk’s Twitter, and revisit our recent interview with Tracey Meares and Tom Tyler about how procedural justice can better local civics and online communities by making people feel like they have a meaningful say in the process.

Nathan Matias is an assistant professor at Cornell University where he leads the CAT Lab, which conducts research on community governance in social media groups.

Transcript

Ethan Zuckerman:

Hi everybody, welcome back to Reimagining the Internet and I’m joined once again by our producer Mike Sugarman. Hi Mike.

Mike Sugarman:

Hey everybody, glad to be back.

Ethan Zuckerman:

This is our final planned episode in our mini-series about trust and today we’re going to be talking about platforms.

Mike Sugarman:

Wouldn’t be the first time we’ve talked about social media platforms on this show.

Ethan Zuckerman:

And it won’t be the last. Mike, how is this episode going to be different?

Mike Sugarman:

Well, I think we’re really asking this question of what happens when users stop trusting platforms. There’s a bit of a Twitter exodus happening right now, and clearly what’s happening there is that lots of people who were using Twitter just don’t trust it anymore.

Ethan Zuckerman:

Right. They don’t trust it to continue to work very well, they don’t trust it to be a safe place. I also think they just don’t trust Elon Musk.

Mike Sugarman:

We see a decent proportion of people moving from Twitter to Mastodon, from what the European regulators have taken to calling “Very Large Online Platforms” to the Fediverse. We’re going to be talking a bit about whether there’s a reason to trust federated social media platforms over these so-called VLOPs.

Ethan Zuckerman:

That’s the very elegant acronym for “Very Large Online Platforms” by the way.

Mike Sugarman:

VLOPs… Sounds like something you test wastewater for. Ok Ethan, why should we care about people moving away from VLOPs?

Ethan Zuckerman:

One of the things that Twitter has historically been very good for is being what we might call a big room. It’s a platform that can support hundreds of millions of people all having more or less the same conversation. And there’s something really important about that. If you want to start a social movement, if you want to promote something like Me Too or Black Lives Matter, it’s really important to be able to reach that huge audience. And one thing we may want to think about as people are moving away from VLOPs and towards the Fediverse is whether there’s a way to hold on to what’s good about that big room while getting something that’s good about the smaller spaces, which is the ability to have rules and norms that meet the needs of a community better.

Mike Sugarman:

And I think what ties all of that together is people are just willing to experiment a little bit more with alternate ways of being online. I think people are starting to realize that having billions of dollars doesn’t exactly qualify you to run a healthy, enjoyable social space.

Ethan Zuckerman:

A lot of what we’re seeing today is people just leaving those VLOPs. Our main guest on today’s episode did exactly that. Nate Matias is a close colleague of ours. He’s an assistant professor at the Cornell University departments of Communication and Information Science, where he leads the Citizens and Technology Lab, better known as CAT Lab. He’s currently at Stanford University, where he is the Lenore Annenberg and Wallace Annenberg Fellow in Communication and Segal Research Fellow at the Center for Advanced Study in the Behavioral Sciences, which is a really big deal. And he is heading, this summer, to be a visiting scholar at the Knight First Amendment Institute at Columbia University.

He’s a fantastic researcher of how communities online develop governance systems for themselves. Nate is very interested in justice, and in how communities of color deal with spaces that are hostile to them and work to mold spaces to their needs.

And he was also my first ever PhD student.

Mike Sugarman:

If I’m getting this right, you made him defend his dissertation with a sword fight?

Ethan Zuckerman:

Well, a plastic sword. He defended with honor.

Mike Sugarman:

And since justice comes up a lot, we’re going to be putting Nate in dialog with Tracey Meares and Tom Tyler. We actually originally interviewed them for this episode, but found it pretty hard to dice up their interview. We’ll be splicing clips from that past episode into this one.

Ethan Zuckerman:

Without further ado, Nathan Matias.

Nathan Matias:

I did decide to leave Twitter largely because I was not confident in its safety and integrity. That was at a point when Twitter had let go many of its safety staff, its leaders in infosec had left, and there were concerns that two-factor authentication, which is a fundamental part of basic security, was breaking on Twitter. And there was a real question that anyone on the platform, particularly anyone who was at risk of facing harassment, had to face. Do you continue to participate on this platform that may still give you access to important networks of attention, or do you manage risk and also try to convince other people to build alternatives and contribute to alternatives by moving elsewhere? And the decision I made was to leave, one that I still stand by.

Ethan Zuckerman:

What were the sort of specific harms you were concerned about for people who were, for instance, being targeted by harassment, something that we know is way too common on Twitter?

Nathan Matias:

I think a few of the harms are ones that people don’t first off think of when they think about Twitter. We often talk about the hateful and harassing speech that people are exposed to on social media. That happens not just on Twitter: when I publish articles, for example, about diversity in science, I get emails from people who are very angry about the idea that people of color might need to be scientists. So Twitter isn’t necessarily the only vector for harassment, but there were particular vulnerabilities that I was worried about, related to people gaining unauthorized access to accounts and to potential impersonation, which we’ve seen a lot of, with some very high profile cases that led to massive losses of money from corporations.

And then also, I think I was concerned about the kind of bringing back of multiple actors who have had a history of inciting violence. That one, I think people have had more disagreements about because of course that’s been true on Twitter in many countries around the world, regardless of how the company sets its policies in the US, but that was also a consideration for me.

I think people often think about the moral case or the safety case, and those are really important starting points for any individual who’s deciding: is it safe for me to be here, should I be here? But very quickly people move to the social question about whether moving to a new platform or new app is going to give them the same access to the relationships that they enjoyed in a particular context. So there’s this kind of collective action problem.

Mike Sugarman:

In 2016—ancient history as far as social media goes—Nathan published a crucial study of collective action on Reddit. That actually meant collective action by communities against the Reddit corporation, as part of a movement advocating for a safer, more just platform.

That study kicked off a long-running research interest for Nate: how communities self-govern on Reddit. It also speaks to a larger question people have all the time in communities, whether those are members of subreddits online or constituents in political groups offline. Do you stick around when there’s trouble and try to fix what’s wrong, or do you leave? It’s something political economist Albert Hirschman called “exit” and “voice.”

Ethan Zuckerman:

I love this book. Exit and Voice is this little hundred page book. You get the sense that Hirschman sort of knocked it out over the course of a month or so in the summer.

Basically if you are a rational economic actor and a product you like suddenly is no good, favorite falafel place, you know, just doesn’t make good falafel anymore, rationally as an economic actor, you’re going to leave and you’re going to find a different falafel place.

The truth is humans are not rational. And so you have a sense of loyalty. You might stick around because you have relationships with the guy who makes the falafel. You might also exercise your voice. You might say, “Hey man, the falafel’s not as crispy as it used to be. I don’t want to leave you. Please fix it and bring back the falafel that I love.”

Hirschman starts this as sort of an examination of economics and very quickly it turns into an examination of political science because if you don’t like what’s going on in your country, exit is usually not an option. You’re going to have to find a way to make change through voice.

Nathan Matias:

I imagine Ethan you’re referring to my work in 2015 that looked at another moment of collective action against a platform, when moderators of large and small communities on Reddit decided to do what they called blacking out their communities in protest of how the company was failing to support them on protections around hate speech. And one of the things I found in that research was that, as we expect from the social science literature, the decision to leave or, in this case, to pressure the company to behave differently isn’t purely an individual decision. It depends on the decisions of others.

And I was able to show, in a set of statistical models that I co-developed with Reddit communities, that factors including how much of an overlap there is with other communities that joined the blackout, how organized the moderators are, and how much risk they face all influenced the decision to leave. I would expect that if we did a similar analysis of people’s departures from Twitter, we would see something similar. People are making those risk calculations, those cost-benefit calculations, and also making decisions with respect to what their peers are choosing to do.

I think one of the things that I observed was that groups that have kind of tighter capacities to organize that have like stronger and more like stable leadership are more able to carry out actions against online platforms. And so I also wonder if the reason journalists and activists in some corners and academics have been able to migrate quickly is that there are institutions and pathways through which they can make collective decisions. Whereas a network like Black Twitter or some of these looser, less centralized, organized networks might actually find it more difficult to make a migration because there’s no set of people who could say, “Hey, our scholarly society is setting up a server here, now everybody join.” That would be a good hypothesis for someone to explore in empirical work.

One of the things that I’ve been inspired by and have other complicated feelings about is this phenomenon where people who are part of minoritized groups will often head to and exercise leadership in spaces that they don’t choose because that’s where the flows of attention are.

So I’m thinking, for example, about a man named Jefferson who moderates a subreddit called Black Fathers. And you can read his story, and numerous journalists have interviewed him. And Black Fathers used to be a subreddit for white people to make really cruel jokes about Black parenthood. And Jefferson looked at that and it was just a terrible thing, and like as a father himself. And so he has carried out like this multi-year endeavor to transform that space, eventually earning the position of head moderator for that space and turning it into this conversation that is actually quite celebratory and affirming of Black fatherhood and Black culture.

And I think we see that over and over again where, due to how algorithms send people and due to the prejudices of dominant cultures, certain spaces receive more attention. Like you think about, you know, danah boyd and collaborators’ work on data voids and these spaces where people land because there are certain search terms. One way you can think about people like Jefferson is that they are going to those kind of gravity holes of attention and trying to transform them, not because they think it’s safe but because that’s where people are paying attention.

Ethan Zuckerman:

Nathan is describing something really interesting here. First, that we shouldn’t think of people participating in communities passively, making decisions about whether or not to spend time there. In some cases, people like Jefferson decide to take an active hand in righting a wrong, and therefore working to mitigate future harm.

But the other thing Nathan just described here is fascinating as well. Jefferson didn’t just change r/blackfathers. He made it into a legitimate space that fellow Black men could trust. By intervening where he saw an injustice, he created a space where people like him might choose to spend time. He lent it a kind of legitimacy that it never had before.

Mike Sugarman:

When we had Tracey Meares and Tom Tyler on the show earlier this year, it was clear that they believe legitimacy and trust go hand in hand for communities, and that’s online and off. There’s no place for users trusting online spaces or citizens trusting governments if they don’t regard those bodies as legitimate. And people, by participating in those bodies, can transform them.

This is part of what Tracey and Tom call procedural justice. Their theory is that citizens will ultimately believe in justice systems when they feel like they have some stake in how that justice system works.

Tracey Meares:

In the law, especially in the criminal law context, we care a lot about people obeying rules and laws. One way we could get people to obey laws and rules is to say, “Well, if you don’t obey this law, we’ll give you a consequence. We will punish you.” If a person obeys the law because of that externalized reason, a fear of punishment, then you wouldn’t say that the person has internalized the value of obeying the law.

If someone obeys a rule because they think the rule is legitimate or the person who has asked them to do something is legitimate, then we would call that motivation an internalized one.

And so, the research that social psychologists have done over time is to show that when you’re looking at legitimacy as a basis for compliance, turns out that in so many studies, procedural justice is one of the most important reasons why people will confer legitimacy on a rule or on a legal authority.

So, what is procedural justice, which was your first question. Again, the research shows that people key in on some four factors when coming to conclusions, as I was saying before, about the legitimacy of rules and legal authorities. And they are these. First, voice or an opportunity to tell their perspective or give their perspective on a situation or on the rule.

Second, whether they’re treated with dignity, respect, concern for their rights, being listened to, being taken seriously, which of course is sort of the flip side of voice. Third, people are looking for indicia of fair decision making. That is, are there factors that show them that the decision maker is coming to decisions on the basis of facts? Are those decisions transparent? Is the decision maker free from bias and so on?

And then last, people are looking for indicia of trustworthiness. They want to be able to expect that a decision maker is going to treat them benevolently in the future. One way of thinking about that last factor is something like, and this is what we’ve seen in the context of the criminal legal space, in their interactions with an authority, people want to believe that the authority they’re dealing with believes that they count, and they make that judgment based on the ways that that authority is treating them.

Ethan Zuckerman:

And so, the goal here is to increase the legitimacy of institutions. I would assume also increase the trust in institutions, which seems absolutely critical at a moment where we know that trust in most American institutions is dropping, trust in the criminal justice system is dropping, particularly within communities of color. Tom, what are some sort of real world successful examples of bringing procedural justice to work? And have we seen demonstrable changes in legitimacy and trust sort of coming from this?

Tom Tyler:

Sure. Well, our work began in the arena of criminal law. And so, we can first think about people’s relationship to authorities like the police. And what we’ve shown in a number of studies is that if people think that they are fairly treated when they deal with the police or if they’re in a community where they think the police generally treat people in procedurally just ways, as Tracey said, they think the police are more legitimate, they’re more trusting in the police, and we see three really important consequences in terms of their behavior.

One consequence is that they are more likely to obey the law, follow rules. And as Tracey mentioned, it’s not just that they follow the rules more, but they do it because of a sense of responsibility and obligation to do it. So, they’re doing it, but they’re doing it in a different way for a different motivation. And that’s really important to us when you get to what else are they doing. So, cooperation. If people feel that the authorities like the police are legitimate, they’re more likely to cooperate. So, they’re more likely to report crimes, report criminals, testify in court, be on a jury.

And then for us, the most important perhaps long-term consequence is people engage in communities where they believe that the authorities are legitimate, where they see the authorities treating people in procedurally just ways. So, one of the big benefits of this approach is that it helps you to build stronger communities because people engage more, they identify more, you get stronger economic development, you get stronger social cohesion, you get more political involvement.

So, it’s a strategy for building stronger communities at the same time that you’re preventing immediate rule-breaking behavior. That’s a big gain. And you can see when we talk about the platforms that we’ve studied, that we see exactly the same point in those platforms, that we have a strategy for platforms that we think is effective in building people’s motivation to follow rules.

But, corresponding to what we’re saying about communities, it also motivates people to want to make a more positive, constructive and civil workspace online, a space to deal with communities, coworkers, whomever is on the platform with them.

Mike Sugarman:

So, participation is really important here, from the community level on up. If we want fair criminal justice systems, or really if we want our society to be a place where citizens really feel invested in institutions, we need what Tom is calling “social cohesion.”

Ethan Zuckerman:

And I think Nathan would agree with that. The issue he sees though, is that platforms don’t really act like institutions, and Elon Musk in particular hasn’t made a very good case recently that Twitter should be the place where people are finding that social cohesion.

As Nathan has made it patently clear, some people just don’t feel safe there. But he pointed out the conundrum that just leaving these platforms isn’t a recipe for creating a safer web.

Nathan Matias:

Yeah, I think that is a very real calculation that people have to make. But we can also create a world where that calculation feels less like a dilemma between multiple bad options. One of the things I’ve been thinking a lot about over the last year has been around what are the functions for the social web that are so important that we shouldn’t have them tied to individual platforms. Like people talk for example about what it would mean to break up the tech industry. Like you take Facebook or Twitter, you break it up. And one of the concerns about that is that if you break up these big tech companies, you risk breaking up, well, before the layoffs, what used to be incredibly capable and sophisticated operations for online safety and content moderation. We can argue about whether they do the right thing, but they’re performing this, like, hundreds-of-millions-of-dollars-a-year operation to try to manage safety and policy online. And so it’s not necessarily clear that if you break up that unit, you necessarily get a safer internet.

And so I’ve been thinking about what are the ways that we can imagine the different functions that have up to now been bundled into a company and reimagine them as public goods that we could stably deliver in a variety of ways. So that they survive the rise and fall of platforms, so that society is resilient to some of these economic policies. So that if we want to break up companies, we still get to keep some of those functions.

I think one of them is actually inclusion, and that only somewhat overlaps with what companies do. Like they have these customer acquisition endeavors, or actually in the Wikimedia Foundation, they have a growth team that collaborates with chapters around the world and their goal is to broaden who can participate on Wikipedia. And they invest resources and organize volunteers towards that goal. And I think that’s one function that isn’t necessarily an app. It’s a combination of outreach and community building and behavioral science that is profoundly important to make a digital environment that allows a diverse group of people to talk to each other. So I think that’s one function.

I think another function absolutely has to be some combination of safety and policy. And there are, you know, there’s legal expertise, there’s policy expertise. There’s a lot of work on psychology and trauma and also figuring out how to do content moderation so it’s not sweatshop work. So that’s a really hard problem, but an important one.

I think a third one has to do with research and enabling the kinds of research capacities that allow knowledge to flow more freely and ensure that the ethics and privacy restraints that are important to hold back harmful research are in place.

Ethan Zuckerman:

Nathan, Tracey, and Tom all have a conviction that research can and should be a key part of encouraging inclusive participation. Research is a very direct way to get people participating in these communities and institutions, not just to get their voice heard, but to feel like they have meaningful input in these settings. That buy-in, that participation in research as well as in governance, leads to meaningful trust.

Tracey Meares:

I think it’s useful to go back to the Facebook thing. So, we’re there, we spent, I don’t know, well over a year, and this was pre-COVID. So, we’re going to California, we’re having endless Zoom meetings, actually learning how, really learning about the company, learning how it’s organized, learning what the people do, from the policy people, who are mostly lawyers, to the design people, the product people who are designers, the engineers. We talk to everybody, talking about our approach.

And so, I think part of the reason why we were able to do it, I would emphasize two things. First, that we were willing to go and listen and learn about them and take them seriously, and make clear that we were really trying to understand what they were doing, that that was really our primary goal.

Second, I think, and this might be a little bit self-congratulatory, but we offered them something. We offered them this perspective that they hadn’t thought about, that they could see was useful to them. And in that sense, if I’m right about the second one, it’s an example that we’ve seen again and again.

You might think that the police wouldn’t really welcome this approach because they’re used to being the authoritarians and want to tell everybody what to do or order people, but then offer them this perspective. And they think, wow, not only am I glad to hear it because I think it will help me better achieve my goals, but actually I want to be treated that way too. And so, we see it again and again.

And with Twitter, we basically repeated this process. We go to Twitter. We meet the people. We learn all of the things. And I think because we went in with an attitude of trying to learn and help as opposed to an attitude of we have a study we want to do, they were both more willing to welcome us in and listen to us and also were happier. I don’t know if I can say happy, happier to support their research. You think that’s fair?

Tom Tyler:

I think, Ethan, that a lot of people resort to sanctions, threats and so on because they can’t think of anything else to do. They say, like police, how am I going to get people to follow my orders? Well, I’ll threaten. But if you offer them an alternative where you could also gain cooperation and people wouldn’t hate you, they wouldn’t mistrust you, they wouldn’t be angry at you and you would feel better about yourself because you would feel that you’re treating people the way you’d like to be treated, people see that that’s, well, actually a better alternative.

And so, then our goal is to show them, and there’s a lot of evidence that it will actually work, which I think is why we’re emphasizing the research. We’re not just saying do it because it’s a good idea. We think it is a good idea, but we also are very clear in showing you that it will achieve the goals that you want to achieve, with these additional benefits.

Mike Sugarman:

The thing about institutions is that you have to amend and modify them, but Nathan points out that building new online spaces offers a really promising opportunity to bake in the participatory processes that foster trust.

Nathan Matias:

And in my personal case, I’ve chosen to participate in a Mastodon server that is a co-op. And so we pool our resources and it’s social.coop and we make collective votes. There are conversations about things. Not everyone wants to be in 100 meetings about the small details. But one of the things I really was impressed with by this particular Mastodon instance was that in addition to caring about our servers and having a volunteer team of moderators with clear policies, there is a conflict mediation team, so that it’s not just about removing content, but if you have a serious argument with someone, you are supported to engage in a thoughtful and respectful way. That’s something that very few platforms offer. And then on top of that, the community has been voting to invest resources from our pooled funds to build and support some of the social infrastructure of the Fediverse. And I realize that that’s just one server. It’s just several hundred people. But I’ve been encouraged to see what can happen when instead of having the business model purely come from advertisers and when people of goodwill are able to pool their resources, what’s actually possible. Again, that’s not the majority of Mastodon, but it’s been very encouraging.

I think there’s a very different picture at the ecosystem level. I think I’ve tended to see the same patterns unfold for Mastodon and parts of the Fediverse that we’ve seen for open source more generally, which is like a tendency toward techno-solutionism, a lack of investment in like the social infrastructure parts, ongoing debates over whether diversity matters and what that might mean. Like the reality is that computer science and the cultures that create and maintain the capacity to create technology are incredibly skewed towards a kind of white male European culture and have a history of setting aside social concerns as secondary. And that’s something that is deep in the DNA of the tech industry. And I think it’s something that will require transformation in any structural arrangement, open source or not, for social platforms to actually be inclusive and safe and serve the common good. So those fights don’t disappear just because it’s not Twitter.

Ethan Zuckerman:

Part of what I am very concerned about with Mastodon right now is the rise of super servers: Mastodon.social, which is leaning towards millions of members. I’ve really never seen a community of millions of members survive without very significant investment in Trust and Safety. And whether it falls apart because of CSAM or spam or scams or impersonation, I do feel like we’re living in a little bit of a golden moment before these very hopeful new networks start showing some dark sides.

Is there work that you are thinking about doing as a scholar and activist in this space in sort of trying to ensure that we have a move to a better future rather than, you know, a future where we find ourselves quite quickly replicating some of the same problems that we’ve seen in existing social media systems?

Nathan Matias:

The short answer is yes. I think you’re absolutely right that these are huge risks for, and I should be clear, I think that we can expect some amount of concentration. That’s just how society works. We see centralization and concentration to some degree in most things where people have choice. And that is also a problem for exactly the same reason you described. And I think that it’s going to depend on whether the Fediverse and Mastodon individual servers and communities are able to build some of these capacities that I described. I’m aware of a number of efforts to do so and I think they can’t come soon enough.

At CAT Lab there are I think two parts of this picture that we have focused on contributing to over the years. First is the kind of moderation and trust and safety work, where we’ve spent years studying and collaborating with and trying to understand the work of volunteer moderators and how to support them to be effective and transparent in their work. And there needs to be a lot more work in that area.

And I think there also need to be new kinds of organizations created. I’ve heard calls for co-ops. It could be any number of institutional forms that can provide some of the trust and safety work in equitable ways. I think that’s, if someone were able to found organizations that are less ultimately predatory than like turned out to be, that they equitably distribute the labor, but in a way that’s actually fair, I think that could be huge. That’s needed desperately right now. It’s also needed desperately in the commercial world because some of these companies are shutting down due to the legal threats and the revelations of how badly they’ve treated people. So I think that’s one thing that is needed across the board.

The other part is research, that we see the Mastodon network do exactly what the tech industry is doing, which is to talk about features and big policy decisions as if they are design decisions and as if you can kind of intuit them by having personal experiences. And the reality is that decisions you make about like how to implement quote tweets or who should receive messages or exactly how to organize the moderation experience. Those are all questions that should have broad input in a structured way and also that should be tested, right? If there are ways that we can reduce hate speech or effectively manage or prevent some of the CSAM that’s distributed on any platform, we should base those decisions on evidence. And at the moment, I think there just isn’t that research capacity within the Fediverse, which is why one answer could be the kind of citizen science that CAT Lab has been doing with Reddit communities, with Wikipedia and with folks on Twitter for the last few years.

Ethan Zuckerman:

And taking a platform’s rules seriously probably relies on believing that those rules are there for a good reason, and actually do something.

Tracey Meares:

Depends on what you think social media is, I mean, dating apps, travel apps, the whole spectrum we’ve had conversations with. What we know is that when it comes to content moderation, these platforms tend to try to address those problems with rules and policies articulated by lawyers that emphasize what we’ve been talking about, a kind of deterrence strategy to the point where recently a lot of the platforms didn’t even publicize the rules that they expected people to obey.

So, when we first started working with Facebook, for example, their rules, the rules that they expected people to obey, weren’t even published. So, there’s no sense in which people could possibly be notified of the law.

There’s no way of knowing it. You wouldn’t be notified when you violated and you were kicked off the platform for 12 hours or whatever it was. So, in the work that we’ve done, what we’ve noticed is that the product itself turns out to be a much more important space for encouraging the kinds of interactions that people want to see with respect to content moderation than the rules that will potentially be violated.

That means if it’s the product, then it’s the designers that really matter more so than the lawyers. And in order for the designers to get this done, they need to have engineer time. And the engineers, of course, they’re also operating with this sort of pop deterrence kind of, they’ll build the thing based on these rules about force and consequence rather than procedural justice, trust ideas.

And so, once those kinds of things, it’s kind of thinking about, I’ve used this analogy, a car. If car is the thing that people are worried about, the standard content moderation will be car bad, get car off road, period. Ban car. Whereas the design approach would say, “Well, cars can be bad, cars can be good. They help people, but they also can kill people.”

So, what we should do is design the space in which the car is operating so that it does its best, lights on the highway, rumble strips, four-lane highways rather than two-lane highways and so forth. And so, with that metaphor, we’ve found some ideas that we know or we seem to know can encourage better interactions, like the group thing, I think. Yeah.

Tom Tyler:

Well, and I think the second part of this that goes along with what Tracey said is many platforms have also been very legal in being reactive. Like the studies that we did were after people broke a rule, but why would you wait until after people break a rule to do things to help them be better?

So, we have a whole series of things that you could imagine you could do, like when people join a platform. You could imagine a process of talking to them about what civility might mean. Think about all these principles of respect for the other people and your wellbeing. You want other people to feel as safe and secure as you feel. How can you do that?

And then both periodic messaging, like we did in Facebook, but much more effective, like ongoing messaging. And the kind of guardrails that Tracey mentioned where some people are already doing this research, like SIREN is doing this research on when people are veering in the direction of saying something that might be bad, they get a message. Like, do you really want to say that, or think about what this might mean. How would people feel about this?

So, all of that can be proactive. And ideally, if we did all those things in the design of the platform, you would then not see so many problems come downstream. And the example Tracey mentions about Nextdoor is when people join a group telling them, here’s some rules for civil discussion. Here’s some principles that people use to keep their conversations positive. Use those rules, like treat people with respect or listen, don’t argue, things like that.

Ethan Zuckerman:

Nathan brought us in for a landing with a very provocative note. Communities always have growing pains, and social networks often find themselves in a make-or-break moment when they start to scale up significantly. That’s when the difficult questions about moderation come up, when tricky decisions have to be made about how to govern this large space that used to be an intimate one.

But Nathan pointed out that this kind of growth is often a positive. Communities grow when people trust them, and questions about the community’s health can ultimately make that a stronger and safer place for the people who choose to participate.

Nathan Matias:

So yeah, my hope is that we’ll see more people excited to test ideas. And this comes back to the scale question that you mentioned, Ethan, because I think for smaller communities, it can feel like things are more manageable. That may or may not be true, but that’s often how people feel. And communities and platforms tend to really start to feel the pressure once they get larger numbers of participants, which is a sign of their success, that people see a particular place as a safe place or as an interesting place and more people come and that can overwhelm leaders. But that pressure also can be a force for systematizing, for making processes more fair, more efficient.

So it’s not always the case that larger necessarily means worse. It does make you more of a target. It means the burdens you bear are more challenging. It means that you have a more diverse, hopefully group of people who have more conflicts, but it also pushes you towards hopefully more systematization, more transparency, accountability and turning to evidence and best practices, which I hope we’ll see more of across the Fediverse.

Mike Sugarman:

So that does it for our series about trust. And we’ve talked about a lot of different things: gamer guilds, international money laundering, policing, content moderation. I think the reason we’re even having these conversations in the first place is right now, people are doing a lot of experimenting online. Often, they’re responding to systems or institutions that they’ve lost trust in, or ones they didn’t trust in the first place.

But there’s a reason we’re wrapping up with Nate, Tom, and Tracey. They, like we do, believe that there’s value in active social and political engagement. As Molly White pointed out in episode 2, a lot of people who are trying to stoke distrust in institutions are also quick to encourage people to take big risks they might not understand the real costs of.

Ethan Zuckerman:

My takeaway from these conversations is that trust is a process. The Internet is still new enough that it’s not like a bank, something we trust because it has a big building with marble floors and heavy vault doors. When we trust the Internet, it’s because there are processes underway that lead to communities that feel safe enough to welcome our participation. Sometimes that trust comes from the fact that we’re involved in governing and steering those communities. And just like trust in a bank could be undermined by a robbery, the many forms of social corrosion are always eating away at the trust we develop online. The only thing we can do, I suspect, is keep trying to build systems worth trusting and work to earn the trust of people we share spaces with online. Frankly, the stakes couldn’t be much higher: the internet is where much of our public space lives in 2023. If we can’t build online spaces worthy of our trust, democracy itself is at risk.

Mike Sugarman:

And thanks for taking a chance with us while we try something pretty new with it. We’d love to hear how you felt about it, so go ahead and shoot us an email at info@publicinfrastructure.org. We will probably try more episodes like these in the future.

As always, be sure to leave us a review on your podcasting app of choice, and if you like what we’re doing, tell your friends and colleagues about it. Thanks as always for listening. I’m Mike Sugarman.

Ethan Zuckerman:

And I’m Ethan Zuckerman. This is Reimagining the Internet.